AI Sales Enablement Content Generation with Codex [2026]

Your sales enablement team is drowning. New product launches, competitive updates, pricing changes, messaging pivots—and somehow they're supposed to keep 50 reps trained on all of it.

Here's the problem: Sales enablement doesn't scale with headcount. You can't hire your way out of content debt.

But AI can help. In this guide, I'll show you how to use OpenAI Codex (GPT-5.3) to automatically generate training materials, battle cards, and playbooks—so your enablement team can focus on strategy, not formatting.

The Sales Enablement Content Crisis

Let's be honest about the state of sales enablement:

- Battle cards are 6 months out of date

- Training materials reference features that no longer exist

- Playbooks assume a sales motion you abandoned last quarter

- Competitive intel is scattered across 47 Slack messages and 12 Google Docs

Your reps are flying blind because enablement can't keep up.

- Average sales enablement team: 2 people supporting 50+ reps

- Content pieces to maintain: 200+ documents

- Update frequency needed: weekly

- Update frequency actual: "When we get to it."

Why Codex for Enablement

OpenAI's Codex (GPT-5.3, released February 5, 2026) is a strong fit for this work because:

- Code generation — Create interactive training modules, not just docs

- Multi-file operations — Update 50 battle cards in one run

- Mid-turn steering — Redirect generation based on feedback

- Tool use — Pull from CRM, product docs, Slack, and more

The killer feature: Codex can read your actual product, pull competitive intel, and synthesize training materials that are always current.

Building Your AI Enablement Engine

Step 1: Define Your Content Types

First, map what you need to generate:

```javascript
const contentTypes = {
  battleCards: {
    structure: [
      'competitor_overview',
      'strengths',
      'weaknesses',
      'our_differentiators',
      'landmines_to_plant',
      'objection_handlers',
      'customer_wins'
    ],
    updateTrigger: ['competitor_news', 'product_update', 'win_loss_feedback'],
    format: 'notion_page'
  },
  playbooks: {
    structure: [
      'situation_overview',
      'target_persona',
      'key_messages',
      'discovery_questions',
      'demo_flow',
      'objection_responses',
      'next_steps'
    ],
    updateTrigger: ['messaging_change', 'new_feature', 'icp_shift'],
    format: 'google_doc'
  },
  trainingModules: {
    structure: [
      'learning_objectives',
      'key_concepts',
      'real_examples',
      'practice_scenarios',
      'quiz_questions',
      'certification_criteria'
    ],
    updateTrigger: ['product_launch', 'process_change', 'quarterly'],
    format: 'interactive_html'
  },
  oneSheets: {
    structure: [
      'headline_value_prop',
      'key_benefits',
      'proof_points',
      'call_to_action'
    ],
    updateTrigger: ['campaign_launch', 'messaging_refresh'],
    format: 'figma_template'
  }
};
```
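The `updateTrigger` arrays double as a routing table: when an event arrives, you can look up which content types it affects. A minimal sketch, assuming events arrive as plain strings that match the trigger names above; `contentTypesForTrigger` is a hypothetical helper, and the map is trimmed to two entries to keep it short:

```javascript
// Trimmed copy of the content map above; only updateTrigger matters here.
const contentTypeTriggers = {
  battleCards: { updateTrigger: ['competitor_news', 'product_update', 'win_loss_feedback'] },
  playbooks: { updateTrigger: ['messaging_change', 'new_feature', 'icp_shift'] },
};

// Hypothetical helper: names of every content type that should be
// regenerated when the given event fires.
function contentTypesForTrigger(types, event) {
  return Object.entries(types)
    .filter(([, spec]) => spec.updateTrigger.includes(event))
    .map(([name]) => name);
}

contentTypesForTrigger(contentTypeTriggers, 'product_update'); // returns ['battleCards']
```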

Step 2: Set Up Codex with Context

Give Codex access to your sources of truth:

```javascript
// enablement-agent.js
// The client interface below (constructor options, tool names, .run()) is
// illustrative; check the official Codex SDK docs for the actual package and API.
const { Codex } = require('@openai/codex');

const enablementAgent = new Codex({
  model: 'gpt-5.3-codex',
  tools: ['file_read', 'file_write', 'web_search', 'api_call'],
  context: `You are a Sales Enablement Content Generator.
Your job is to create and update sales training materials that help reps win more deals.

SOURCES OF TRUTH:
- Product documentation: /docs/product/
- Competitive intel: /docs/competitive/
- Win/loss reports: /crm/win-loss/
- Customer stories: /docs/customers/
- Pricing: /docs/pricing/

CONTENT PRINCIPLES:
1. Be specific and actionable—no generic fluff
2. Include real customer examples when available
3. Anticipate rep questions and objections
4. Make content scannable (bullets > paragraphs)
5. Include talk tracks reps can use verbatim

VOICE:
- Confident but not arrogant
- Data-driven with proof points
- Conversational, not corporate`
});
```
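Keeping sources, principles, and voice as data rather than one long string makes them easier to review and change. A minimal sketch, assuming you pass the result as the `context` option above; `buildEnablementContext` is a hypothetical helper, not part of any SDK:

```javascript
// Hypothetical helper: assemble the agent's context string from structured
// config so source paths and principles live in one reviewable place.
function buildEnablementContext({ sources, principles, voice }) {
  const sourceLines = Object.entries(sources).map(([label, path]) => `- ${label}: ${path}`);
  const principleLines = principles.map((p, i) => `${i + 1}. ${p}`);
  const voiceLines = voice.map((v) => `- ${v}`);
  return [
    'You are a Sales Enablement Content Generator.',
    '',
    'SOURCES OF TRUTH:',
    ...sourceLines,
    '',
    'CONTENT PRINCIPLES:',
    ...principleLines,
    '',
    'VOICE:',
    ...voiceLines,
  ].join('\n');
}

const context = buildEnablementContext({
  sources: {
    'Product documentation': '/docs/product/',
    'Competitive intel': '/docs/competitive/',
    'Win/loss reports': '/crm/win-loss/',
  },
  principles: ['Be specific and actionable', 'Make content scannable (bullets > paragraphs)'],
  voice: ['Confident but not arrogant', 'Conversational, not corporate'],
});
```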

Step 3: Battle Card Generator

Generate battle cards that actually get used:

```javascript
async function generateBattleCard(competitor) {
  // Gather fresh intel
  const competitorData = await gatherCompetitorIntel(competitor);
  const recentWins = await getWinsAgainst(competitor);
  const recentLosses = await getLossesTo(competitor);

  const battleCard = await enablementAgent.run(`
Generate a sales battle card for ${competitor}.

COMPETITOR DATA:
${JSON.stringify(competitorData, null, 2)}

RECENT WINS AGAINST THEM (last 90 days):
${JSON.stringify(recentWins, null, 2)}

RECENT LOSSES TO THEM (last 90 days):
${JSON.stringify(recentLosses, null, 2)}

Generate the battle card with these sections:

## Quick Stats
- Founded, HQ, funding, employee count
- Key customers
- Pricing (if known)

## Where They Win
- Their genuine strengths (be honest)
- Types of deals they tend to win

## Where We Win
- Our differentiators vs them
- Types of deals we tend to win

## Landmines to Plant
- Questions that expose their weaknesses
- Features to emphasize in demos

## Objection Handlers
For each common objection, provide:
- Objection
- Response framework
- Proof point to cite

## Talk Track
A 30-second positioning statement when ${competitor} comes up

## Customer Wins
Specific stories of customers who chose us over them
`);

  return battleCard;
}
```
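The helpers `getWinsAgainst` and `getLossesTo` are left to you. A minimal in-memory sketch of the 90-day filter they imply, assuming each CRM deal is a plain object shaped like `{ name, competitor, outcome, closedAt }`; the function `recentDeals` and the sample deals (RivalCo, Acme, and so on) are hypothetical:

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Hypothetical stand-in for getWinsAgainst / getLossesTo: deals against the
// given competitor with the given outcome, closed within the last `days` days.
function recentDeals(deals, competitor, outcome, { days = 90, now = new Date() } = {}) {
  const cutoff = now.getTime() - days * DAY_MS;
  return deals.filter(
    (d) =>
      d.competitor.toLowerCase() === competitor.toLowerCase() &&
      d.outcome === outcome &&
      new Date(d.closedAt).getTime() >= cutoff
  );
}

// Made-up sample data for illustration.
const deals = [
  { name: 'Acme', competitor: 'RivalCo', outcome: 'won', closedAt: '2026-01-20' },
  { name: 'Globex', competitor: 'RivalCo', outcome: 'lost', closedAt: '2026-02-01' },
  { name: 'Initech', competitor: 'rivalco', outcome: 'won', closedAt: '2025-06-01' },
];

const now = new Date('2026-02-15');
recentDeals(deals, 'RivalCo', 'won', { now }); // only Acme; Initech closed more than 90 days earlier
```

Passing `now` explicitly keeps the filter deterministic, which makes it easy to test and to re-run for a past reporting window.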

Step 4: Training Module Generator

Create interactive training that reps actually complete:

```javascript
async function generateTrainingModule(topic, config) {
  // Named trainingModule to avoid shadowing Node's CommonJS `module` object
  const trainingModule = await enablementAgent.run(`
Create an interactive sales training module on: ${topic}
Target audience: ${config.audience}
Estimated completion time: ${config.duration} minutes
Prerequisites: ${config.prerequisites.join(', ')}

Generate as HTML with embedded interactivity:

1. LEARNING OBJECTIVES
- What will they be able to do after this module?

2. CORE CONTENT
- Break into 3-5 digestible sections
- Include real examples from our customer base
- Add "Try This" interactive elements

3. SCENARIO PRACTICE
- 3 realistic scenarios they might encounter
- Multiple choice responses with feedback
- Explain why correct answers work

4. TALK TRACK BUILDER
- Interactive section where they build their own pitch
- Template with fill-in-the-blank sections
- Example completed version

5. QUIZ
- 5 questions testing key concepts
- Mix of multiple choice and short answer
- Passing score: 80%

6. RESOURCES
- Links to related content
- Cheat sheet for quick reference
- Who to ask for help

Output as a complete, styled HTML document that can be hosted in our LMS.
`);

  return trainingModule;
}
```
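If you track quiz results outside the generated HTML, the 80% passing rule is easy to enforce yourself. A minimal sketch, assuming quiz questions are objects with `id`, `type`, and (for multiple choice) `correct`; `gradeQuiz` is hypothetical, and it scores only multiple-choice items because short answers need human or model review:

```javascript
// Hypothetical grader: percentage of multiple-choice questions answered
// correctly, compared against the module's passing threshold.
function gradeQuiz(questions, answers, passingPct = 80) {
  const gradable = questions.filter((q) => q.type === 'multiple_choice');
  const correct = gradable.filter((q) => answers[q.id] === q.correct).length;
  const pct = gradable.length === 0 ? 0 : (correct / gradable.length) * 100;
  return { correct, total: gradable.length, pct, passed: pct >= passingPct };
}

const quiz = [
  { id: 'q1', type: 'multiple_choice', correct: 'b' },
  { id: 'q2', type: 'multiple_choice', correct: 'a' },
  { id: 'q3', type: 'multiple_choice', correct: 'd' },
  { id: 'q4', type: 'multiple_choice', correct: 'c' },
  { id: 'q5', type: 'short_answer' },
];

// 3 of 4 gradable answers are correct, so pct is 75 and passed is false.
const result = gradeQuiz(quiz, { q1: 'b', q2: 'a', q3: 'd', q4: 'a', q5: 'It depends' });
```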

Using Mid-Turn Steering

Codex's mid-turn steering lets you refine generation in real time:

```javascript
async function createPlaybookWithFeedback(playbookType) {
  // startTask, checkpoint, steer, continue, and complete are illustrative
  // method names; check your Codex client for its actual steering interface.

  // Start generation
  const session = await enablementAgent.startTask(`
Create a sales playbook for: ${playbookType}
Start with discovery questions.
`);

  // Review first section
  const discoverySection = await session.checkpoint();
  console.log('Discovery section:', discoverySection);

  // Steer based on review
  await session.steer(`
Good start, but:
- Add more questions about budget timing
- Include a question about competitive evaluation
- Make questions more open-ended
`);

  // Continue with refinements
  await session.continue();

  // Final output
  return session.complete();
}
```
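If your client does not expose mid-turn steering, a common fallback is to review each draft and re-prompt with the feedback. This is a different technique from steering a running turn: each round is a fresh request that carries the previous draft. A minimal sketch, assuming `generate` is any async function from prompt to text (for example, a wrapper around `enablementAgent.run`) and `review` returns a list of requested changes, empty when the draft is acceptable; all names here are hypothetical:

```javascript
// Re-prompting loop: generate a draft, collect review notes, and fold the
// notes into the next prompt until the reviewer has none or rounds run out.
async function refineWithFeedback(basePrompt, generate, review, maxRounds = 3) {
  let prompt = basePrompt;
  let draft = '';
  for (let round = 1; round <= maxRounds; round++) {
    draft = await generate(prompt);
    const notes = await review(draft);
    if (notes.length === 0) return { draft, rounds: round };
    const noteList = notes.map((n) => `- ${n}`).join('\n');
    prompt = `${basePrompt}\n\nPrevious draft:\n${draft}\n\nRevise it to address:\n${noteList}`;
  }
  return { draft, rounds: maxRounds };
}

// Mock model and reviewer for illustration: the model only adds a budget
// question once the prompt asks for one.
const fakeGenerate = async (p) =>
  p.includes('budget timing')
    ? 'Q1: What is driving timing? Q2: When is budget approved?'
    : 'Q1: What is driving timing?';
const fakeReview = async (d) => (d.includes('budget') ? [] : ['Add more questions about budget timing']);

refineWithFeedback('Create discovery questions.', fakeGenerate, fakeReview).then((r) => console.log(r.rounds)); // 2
```

Capping `maxRounds` keeps a picky reviewer from looping forever; the last draft is returned either way so a human can take over.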

Automation: Always-Current Content

Set up automated updates so content never goes stale:

```javascript
// content-updater.js

// Trigger: Competitor releases new feature
async function onCompetitorUpdate(competitor, update) {
  const affectedContent = await findContentMentioning(competitor);

  for (const content of affectedContent) {
    const updated = await enablementAgent.run(`
Update this content to reflect new competitor information:

ORIGINAL CONTENT:
${content.body}

NEW INFORMATION:
${competitor} just ${update.summary}
Details: ${update.details}

Update the content to:
1. Reflect any new competitive threats
2. Update our positioning if needed
3. Add new objection handlers if relevant
4. Keep the same format and structure

Return only the updated content.
`);

    await saveContent(content.id, updated);
    await notifyEnablement(`Updated ${content.title} based on ${competitor} news`);
  }
}
```
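`findContentMentioning` is left undefined above. A minimal in-memory sketch, assuming your content library is an array of `{ id, title, body }` objects; the function and the sample library are hypothetical. It matches the competitor name case-insensitively and as a whole word, so a search for "RivalCo" does not also hit "RivalCorp":

```javascript
// Escape regex metacharacters so competitor names match literally.
function escapeRegExp(s) {
  return s.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');
}

// Hypothetical in-memory findContentMentioning: case-insensitive match that is
// not preceded or followed by a word character, checked in title and body.
function findContentMentioning(library, competitor) {
  const pattern = new RegExp(`(?<!\\w)${escapeRegExp(competitor)}(?!\\w)`, 'i');
  return library.filter((doc) => pattern.test(doc.title) || pattern.test(doc.body));
}

// Made-up sample library for illustration.
const library = [
  { id: 1, title: 'RivalCo battle card', body: 'Where they win...' },
  { id: 2, title: 'Pricing one-sheet', body: 'Compared with rivalco, we include SSO.' },
  { id: 3, title: 'Onboarding playbook', body: 'Mentions RivalCorp only.' },
];

findContentMentioning(library, 'RivalCo').map((d) => d.id); // [1, 2]
```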

```javascript
// Trigger: Product team ships feature
async function onProductUpdate(feature) {
  // Update relevant playbooks
  const playbooks = await findPlaybooksForFeature(feature.area);

  for (const playbook of playbooks) {
    const updated = await enablementAgent.run(`
Add information about our new feature to this playbook:

NEW FEATURE: ${feature.name}
DESCRIPTION: ${feature.description}
KEY BENEFITS: ${feature.benefits.join(', ')}
TARGET PERSONA: ${feature.persona}

CURRENT PLAYBOOK:
${playbook.body}

Updates needed:
1. Add feature to "What We Offer" section
2. Create demo talking points
3. Add discovery questions that surface need for this feature
4. Update objection handlers if this addresses known concerns

Return only the updated playbook.
`);

    // Persist the rewrite; otherwise the generated update is discarded
    await saveContent(playbook.id, updated);
  }

  // Create training module for new feature
  await generateTrainingModule(`New Feature: ${feature.name}`, {
    audience: 'all-sales',
    duration: 15,
    prerequisites: ['product-fundamentals']
  });
}
```
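Both trigger handlers await one model call per document inside a loop, so a single failed call (a timeout, a rate limit) aborts the rest of the batch. A minimal sketch of a wrapper that records failures and keeps going, assuming each item has an `id`; `updateEach` is hypothetical, and `updateFn` would be whatever runs the agent and saves the result:

```javascript
// Run an async update for each item sequentially, recording failures instead
// of letting one rejected call abort the remaining items.
async function updateEach(items, updateFn) {
  const updated = [];
  const failed = [];
  for (const item of items) {
    try {
      await updateFn(item);
      updated.push(item.id);
    } catch (err) {
      failed.push({ id: item.id, error: err.message });
    }
  }
  return { updated, failed };
}

// Example: the second item fails, but the first is still reported as updated.
updateEach([{ id: 'a' }, { id: 'b' }], async (item) => {
  if (item.id === 'b') throw new Error('model timeout');
}).then((summary) => console.log(summary));
```

The returned summary is a natural payload for `notifyEnablement`, so the team sees which documents still need attention.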

Content Quality Checklist

Have Codex self-check every piece of content:

```javascript
async function validateContent(content, type) {
  const validation = await enablementAgent.run(`
Validate this ${type} against our quality standards:

CONTENT:
${content}

CHECK FOR:
1. ✓ Specificity — Are claims backed by data/examples?
2. ✓ Accuracy — Do features/pricing match current product?
3. ✓ Actionability — Can a rep use this immediately?
4. ✓ Scannability — Is it easy to skim?
5. ✓ Completeness — Are all required sections present?
6. ✓ Freshness — Are examples and data current?
7. ✓ Voice — Does it match our brand tone?

Return only valid JSON (no prose, no code fences) in this shape:
{
  "score": <integer from 0 to 100>,
  "issues": ["list of specific problems"],
  "suggestions": ["how to fix each issue"],
  "approved": <true or false>
}
`);

  // Throws if the reply is not bare JSON
  return JSON.parse(validation);
}
```
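`JSON.parse` alone is brittle here: models sometimes wrap JSON in markdown code fences, and nothing checks that the fields have the expected types. A minimal sketch of a stricter parser, assuming the reply shape requested above; `parseValidationReply` is a hypothetical helper you could call instead of the bare `JSON.parse`:

```javascript
// Parse the validation reply, stripping a surrounding markdown code fence if
// present, then check that every field has the expected type and range.
function parseValidationReply(text) {
  const cleaned = text
    .trim()
    .replace(/^`{3}(?:json)?\s*/i, '')
    .replace(/\s*`{3}$/, '');
  const result = JSON.parse(cleaned);
  if (typeof result.score !== 'number' || result.score < 0 || result.score > 100) {
    throw new Error('score must be a number from 0 to 100');
  }
  if (!Array.isArray(result.issues) || !Array.isArray(result.suggestions)) {
    throw new Error('issues and suggestions must be arrays');
  }
  if (typeof result.approved !== 'boolean') {
    throw new Error('approved must be a boolean');
  }
  return result;
}

// Example reply wrapped in a fence (built with repeat() to keep this snippet readable).
const fence = '`'.repeat(3);
const reply = `${fence}json\n{"score": 82, "issues": [], "suggestions": [], "approved": true}\n${fence}`;
parseValidationReply(reply).approved; // true
```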

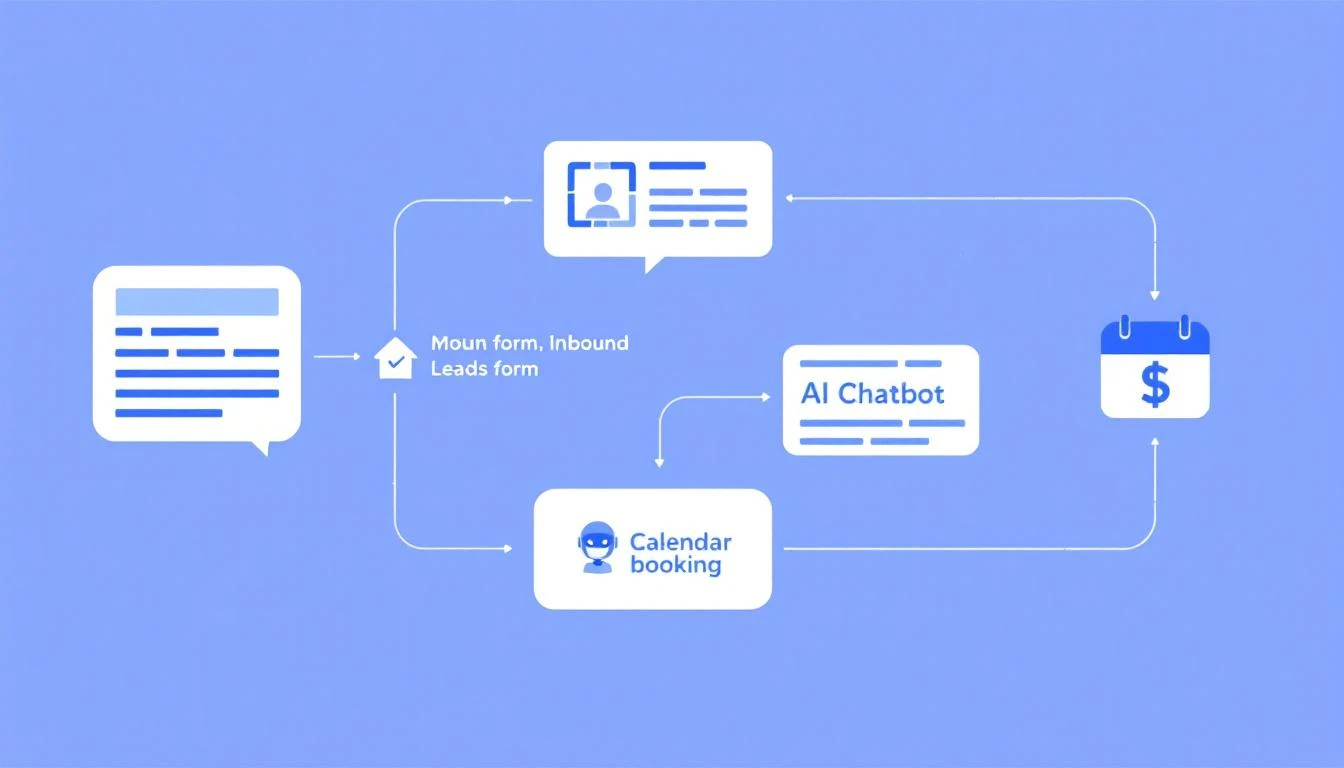

Integration with Enablement Stack

Seismic/Highspot Integration

Push generated content to your enablement platform:

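```javascript
async function publishToSeismic(content) {
  const reply = await enablementAgent.run(`
Format this content for Seismic upload:

${content}

- Add proper metadata tags
- Set appropriate permissions (sales-team)
- Include search keywords
- Add related content suggestions

Return only valid JSON with the keys "title", "body", "tags", and "keywords".
`);

  // Parse the reply (as validateContent does) before reading its fields
  const formatted = JSON.parse(reply);

  // `seismic` is assumed to be an already-configured client for your instance
  await seismic.content.create({
    title: formatted.title,
    body: formatted.body,
    tags: formatted.tags,
    permissions: ['sales-team'],
    keywords: formatted.keywords
  });
}
```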
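Before pushing model output to a shared platform, it helps to validate required fields and normalize tags so search facets don't fragment ("Competitive" vs "competitive "). A minimal sketch, assuming the field names from the `seismic.content.create` call above; `buildPublishPayload` is hypothetical, so check your platform's API for its real schema:

```javascript
// Hypothetical payload builder: reject missing title/body, then trim,
// lowercase, and de-duplicate tags and keywords.
function buildPublishPayload(formatted, permissions = ['sales-team']) {
  for (const field of ['title', 'body']) {
    if (typeof formatted[field] !== 'string' || formatted[field].trim() === '') {
      throw new Error(`Missing required field: ${field}`);
    }
  }
  const normalize = (list = []) => [
    ...new Set(list.map((s) => s.trim().toLowerCase()).filter(Boolean)),
  ];
  return {
    title: formatted.title.trim(),
    body: formatted.body,
    tags: normalize(formatted.tags),
    permissions,
    keywords: normalize(formatted.keywords),
  };
}

const payload = buildPublishPayload({
  title: ' RivalCo Battle Card ',
  body: 'Where they win...',
  tags: ['Competitive', 'competitive ', 'RivalCo'],
});
// payload.tags is ['competitive', 'rivalco']; payload.keywords is []
```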

Slack Integration for Requests

Let reps request content updates directly:

```javascript
// Slack command: /enablement-request (Bolt for JavaScript)
app.command('/enablement-request', async ({ command, ack, respond }) => {
  // Acknowledge first; Slack expects an ack within 3 seconds
  await ack();

  const request = command.text;

  // Quick triage; parse the reply before reading its fields
  const triage = JSON.parse(await enablementAgent.run(`
Triage this enablement request:
"${request}"

Determine:
1. Content type needed (battle card, playbook, training, etc.)
2. Priority (urgent, normal, nice-to-have)
3. Can this be auto-generated or needs human input?
4. Estimated time to create

Return only valid JSON with the keys "contentType", "priority",
"canAutoGenerate" (boolean), and "estimatedTime".
`));

  if (triage.canAutoGenerate) {
    const content = await generateContent(triage.contentType, request);
    await respond(`✅ Created! Here's your ${triage.contentType}: ${content.url}`);
  } else {
    await createEnablementTicket(request, triage);
    await respond(`📋 Request logged. Enablement team will review.`);
  }
});
```
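Because the triage result decides whether content ships without a human in the loop, it is worth guarding against unexpected values. A minimal sketch, assuming the JSON keys requested above; the content-type labels and `normalizeTriage` are hypothetical, and anything unrecognized falls back to the safe path (a ticket) instead of auto-generation:

```javascript
// Assumed labels; align these with whatever generateContent actually supports.
const CONTENT_TYPES = new Set(['battle_card', 'playbook', 'training', 'one_sheet']);
const PRIORITIES = new Set(['urgent', 'normal', 'nice-to-have']);

// Hypothetical guard: auto-generate only for a known content type and an
// explicit boolean true; default unknown priorities to 'normal'.
function normalizeTriage(raw) {
  const contentType = CONTENT_TYPES.has(raw.contentType) ? raw.contentType : null;
  return {
    contentType,
    priority: PRIORITIES.has(raw.priority) ? raw.priority : 'normal',
    canAutoGenerate: raw.canAutoGenerate === true && contentType !== null,
  };
}

normalizeTriage({ contentType: 'webinar', priority: 'ASAP', canAutoGenerate: 'yes' });
// { contentType: null, priority: 'normal', canAutoGenerate: false }
```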

Measuring Impact

Track whether AI-generated content actually works:

| Metric | Before AI | After AI | Change |
|---|---|---|---|
| Battle cards accessed/month | 45 | 312 | +593% |
| Content freshness (avg age) | 4.2 months | 2.1 weeks | 8x fresher |
| Rep content requests | 28/month | 8/month | -71% |
| Time to create training | 40 hours | 4 hours | -90% |
| Enablement NPS | 34 | 71 | +37 pts |
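The Change column is simple arithmetic, worth recomputing whenever you refresh the table. A small sketch that reproduces the figures above; the months-to-weeks conversion assumes 52/12 weeks per month, which puts the freshness ratio at roughly 8.7x (the table rounds down to 8x). `pctChange` is a hypothetical helper:

```javascript
// Percent change from a "before" value to an "after" value.
function pctChange(before, after) {
  return ((after - before) / before) * 100;
}

console.log(Math.round(pctChange(45, 312))); // 593 (battle cards accessed/month)
console.log(Math.round(pctChange(28, 8)));   // -71 (rep content requests)
console.log(Math.round(pctChange(40, 4)));   // -90 (hours to create training)

// Freshness: convert months to weeks before comparing average ages.
const ageBeforeWeeks = 4.2 * (52 / 12); // about 18.2 weeks
console.log((ageBeforeWeeks / 2.1).toFixed(1)); // 8.7
```

NPS is reported as a point difference (71 - 34 = 37) rather than a percentage, because NPS is already a bounded score.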

Reps use content more when it's actually helpful and current. Shocking, I know.

Getting Started

- Audit current content — What's stale? What's missing?

- Prioritize — Start with battle cards (highest impact, clearest structure)

- Set up sources — Connect Codex to product docs, CRM, competitive intel

- Generate first batch — Run the generator by hand for 3-5 battle cards and review their quality before automating

- Automate triggers — Set up auto-updates on competitor/product changes

- Measure and iterate — Track usage and gather rep feedback

Related Resources

- AI Objection Handling Battlecard Generator

- AI Sales Playbook Generator with Codex

- AI Sales Onboarding Automation with Claude


Scale Enablement Without Scaling Headcount

MarketBetter helps GTM teams work smarter with AI-powered automation. From battle cards to training modules, we help you keep reps equipped with what they need to win.

See how AI can transform your sales enablement.